HuMMan v1.0: Reconstruction Subset


HuMMan v1.0: Reconstruction Subset (HuMMan-Recon) consists of 153 subjects and 339 sequences. It provides color images, masks (obtained via matting), SMPL parameters, and camera parameters. The dataset is challenging because of its diverse subject appearances and expressive actions. It also makes it possible to benchmark reconstruction algorithms under realistic settings, with commercial sensors, dynamic subjects, and automatic annotations powered by computer vision. We also provide textured meshes reconstructed from multi-view RGB-D images using a classical pipeline.
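As a rough illustration of how a matting mask is typically combined with a color image, the sketch below isolates the subject from the background using NumPy. It does not reflect the dataset's actual file layout or the official toolbox: the function name `apply_mask`, the array shapes, and the assumed mask value ranges (0 to 255 or 0 to 1) are all hypothetical.

```python
import numpy as np


def apply_mask(image, mask, background=0):
    """Blend `image` over a constant background using `mask` as alpha.

    Assumptions (not taken from the dataset documentation):
    - `image` is an H x W x C array (e.g. uint8 RGB).
    - `mask` is an H x W (or H x W x 1) array, either in [0, 1] or [0, 255].
    """
    image = np.asarray(image)
    mask = np.asarray(mask)
    if image.shape[:2] != mask.shape[:2]:
        raise ValueError("image and mask must share height and width")

    # Matting masks can be soft, so treat them as an alpha channel in [0, 1].
    alpha = mask.astype(np.float32)
    if alpha.max() > 1.0:
        alpha = alpha / 255.0

    # Give alpha a trailing channel axis so it broadcasts over the colors.
    alpha = alpha[..., None] if alpha.ndim == 2 else alpha[..., :1]

    out = alpha * image.astype(np.float32) + (1.0 - alpha) * background
    return out.round().astype(image.dtype)


# Tiny synthetic example: a 2 x 2 gray image with a diagonal foreground.
demo_image = np.full((2, 2, 3), 200, dtype=np.uint8)
demo_mask = np.array([[255, 0], [0, 255]], dtype=np.uint8)
demo_out = apply_mask(demo_image, demo_mask)
```

Treating the mask as a soft alpha rather than thresholding it keeps the fine boundary detail (hair, loose clothing) that matting is meant to preserve.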

Download links and a toolbox can be found here. Please contact Zhongang Cai (caiz0023@e.ntu.edu.sg) to give feedback or to add benchmarks.

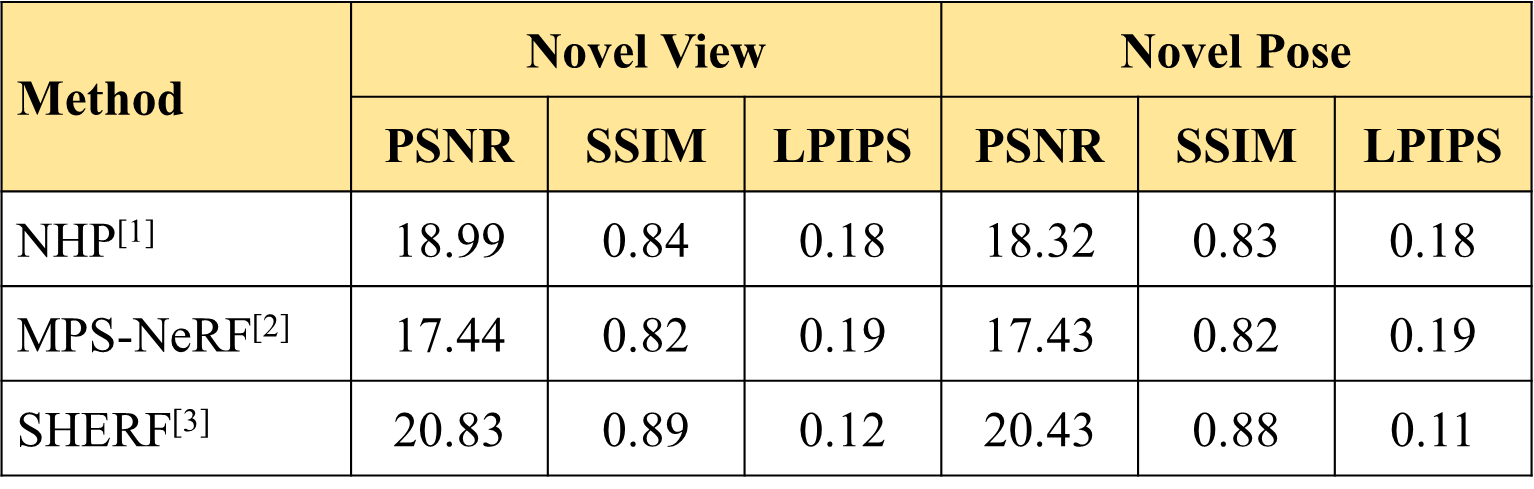

Benchmark: Generalizable Animatable Avatar from Single Image

[2] MPS-NeRF: Generalizable 3D Human Rendering from Multiview Images

[3] SHERF: Generalizable Human NeRF from a Single Image